You've probably seen the movies: mechanical armies marching through cities, glowing red-eyed robots taking over the world, and computers deciding that humans are the problem. It's dramatic, frightening, and a lot of fun!

But here's a question that might surprise you: Can a robot really decide to be evil?

Imagine scolding your toaster for burning your bread: "Bad toaster! You're so wicked!" Sounds silly, right? That's because your toaster simply follows the settings you gave it; it doesn't make decisions. What if I told you that even the world's most sophisticated robot is more like that toaster than you might think?

Let's uncover the truth about robots, explore whether they can be evil, and figure out what we should really be thinking about instead.

Understanding What "Evil" Really Means

We must first define "evil" before we can discuss evil robots.

When we call something or someone "evil," we mean that they choose to do harm on purpose. A villain in a story is evil because they want to hurt people and do it anyway, even though they know it's wrong.

Think of it this way: if a branch falls from a tree and accidentally injures someone, we don't call the tree evil. The tree didn't choose to drop the branch, and it has no idea what "hurt" means. It is simply following the laws of nature, which is all trees do.

Now for the crucial question: Can robots make choices? Can they tell right from wrong? Can they want anything?

The Truth About How Robots Think (Spoiler: They Don't!)

You may be surprised to learn that robots don't actually think, at least not the way you and I do.

The Recipe Book Analogy

Let's say you have the most comprehensive recipe book ever made. It provides step-by-step instructions for every scenario you may run into when baking. "Add milk if the batter is too thick. Turn the dial to the left if the oven temperature is too high. Refer to page 47 if someone requests chocolate cake."

No matter how big and detailed that recipe book is, whoever uses it is still just following directions. The book isn't thinking about baking, deciding it wants to invent something new, or understanding why sugar makes things sweet.

That's what a robot is: a very advanced recipe book that can follow extremely complicated instructions very quickly. But it is still only doing what it was told.
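For readers curious what "following a recipe" looks like inside a machine, here is a minimal Python sketch. It is a toy, not how any real robot is built: the function `recipe_robot` and its rules are hypothetical, borrowed from the recipe-book example.

```python
# A toy "recipe robot": a minimal sketch of rule-following, not a real robot design.
# The rules are hypothetical, taken from the recipe-book example.

def recipe_robot(batter_too_thick: bool, oven_too_hot: bool, request: str) -> list[str]:
    """Return the actions the robot takes. It only ever does what a rule says."""
    actions = []
    if batter_too_thick:
        actions.append("add milk")
    if oven_too_hot:
        actions.append("turn dial left")
    if request == "chocolate cake":
        actions.append("go to page 47")
    # There is no rule that produces "be evil", so that action can never appear.
    return actions


print(recipe_robot(True, False, "chocolate cake"))
# prints ['add milk', 'go to page 47']
```

Notice there is no rule for "be evil," so the robot simply can't do it. Real robots have vastly more rules, and many of them are learned from data rather than written by hand, but the robot is still carrying out instructions rather than forming wishes of its own.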

What About AI and Smart Robots?

"But what about AI robots that can learn and make decisions?" you may be asking yourself.

Great question! Here's the key: modern AI robots can learn patterns and make decisions, but they still follow the rules humans programmed into them about how to learn and how to decide.

It's a bit like teaching a dog tricks. Dogs can be trained to sit, stay, and even solve puzzles. The dog really does learn and make choices, but only within the limits of dog instincts and dog thinking. It won't suddenly decide to become a pilot or a poet, because dog brains don't work that way.

In the same way, AI robots learn and make decisions within limits that humans set. They can't just decide, "You know what? Today, I'm going to be evil."

So Why Do Things Go Wrong With Robots?

If robots can't choose to be evil, why do we keep hearing about problems with AI and robots?

The answer is simple but important: when robots cause harm, the cause is human choices and human mistakes, not robots deciding to do wrong.

Three Main Ways Robots Can Cause Problems

1. Bad Programming (The Wrong Recipe)

Imagine telling a robot, "Make everyone happy." Sounds good, right? But if the robot measures happiness by counting smiles, it might conclude that forcing everyone to smile all the time does the job. It wasn't trying to be cruel; it was following a badly written instruction.

Here's a real-world version of this problem: a social media company built an AI to show users content they would "engage with" (like, share, or comment on). The AI discovered that divisive, angry content got a lot of engagement, so it showed more and more of it. It wasn't trying to make anyone angry; it was following its instruction to increase interaction. The people who built it hadn't fully thought through the consequences.
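The "wrong recipe" problem can be sketched in a few lines of Python. This is a toy, not any real company's system: the `Post` type, the `rank_feed` function, and every number below are hypothetical. The only instruction the ranker gets is "show the most engaging posts first."

```python
# A hypothetical feed ranker: a minimal sketch, assuming each post already has a
# predicted engagement score. Posts and scores are made up for illustration.
from dataclasses import dataclass


@dataclass
class Post:
    title: str
    predicted_engagement: float  # e.g. expected likes + shares + comments
    is_angry: bool               # the ranker never reads this field


def rank_feed(posts: list[Post]) -> list[Post]:
    # The only instruction: put the most "engaging" posts first.
    return sorted(posts, key=lambda p: p.predicted_engagement, reverse=True)


posts = [
    Post("Cute puppy learns to swim", 120.0, False),
    Post("Outrageous argument about pizza toppings", 340.0, True),
    Post("Local library opens new wing", 45.0, False),
]

for post in rank_feed(posts):
    print(post.title)
# prints the pizza argument first, then the puppy, then the library
```

The angry post lands on top only because it has the highest score. The code never even looks at `is_angry`, so it can't "want" anger; the problem lives in the instruction people chose.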

2. Biased Data (Learning From the Wrong Examples)

Robots learn from the information we give them. If we accidentally teach them with unfair or one-sided examples, they will make biased choices.

Consider this: If you trained a robot to recognize "good students," but you only showed it images of students from one kind of school, it might mistakenly believe that students from other kinds of schools aren't "good." The robot is simply using the incomplete and skewed information it was given; it is not being cruel.
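Here is a deliberately oversimplified Python sketch of learning from skewed examples. Real AI systems look at far more than a school name, so treat this as a cartoon of the idea rather than a real classifier; the school names, `train`, and `predict` are all made up.

```python
# A deliberately simple "learner": a minimal sketch, assuming the robot can only
# judge students by which school they attend. All names and data are hypothetical.

def train(examples: list[tuple[str, bool]]) -> set[str]:
    """Remember every school that appeared with a 'good student' label."""
    return {school for school, is_good in examples if is_good}


def predict(known_good_schools: set[str], school: str) -> bool:
    # The learner has no idea other schools exist: unseen means "not good".
    return school in known_good_schools


# Skewed training data: every example comes from one school.
training_data = [("Hilltop Academy", True), ("Hilltop Academy", True)]
model = train(training_data)

print(predict(model, "Hilltop Academy"))   # True
print(predict(model, "Riverside School"))  # False, only because it never saw Riverside
```

The learner isn't being unfair on purpose. It rejects Riverside students because the examples it was given never included them, which is exactly the kind of gap people need to catch before training.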

3. Being Used for Harmful Purposes (The Hammer Problem)

A hammer is a tool. It can be used to build a house or to break a window. The hammer itself isn't good or bad; what matters is how people use it.

Robots are no different. Drones can be used as weapons or to deliver medicine to remote communities. A facial recognition system can help find missing children, or it can be used to invade people's privacy. Humans make those choices, not the robots.

The Scary Movie Scenario: Could It Ever Happen?

In movies, robots often become conscious, or "self-aware," and then decide that humans are a threat. Could this really happen?

Here's what scientists know right now: we don't understand consciousness well enough to build it. Even our own consciousness is still largely a mystery to us!

The Toaster Question

Suppose you keep adding features to a toaster: first it toasts bread, then it reads you the news, then it learns your preferences, then it chats with you. At what point does it become "alive" and conscious?

Many scientists would say: never, at least not just by adding features. Stacking more LEGO bricks won't turn your LEGO castle into a real castle with real people inside, and piling features onto a toaster won't create consciousness, no matter how sophisticated it seems.

Will we ever build truly conscious machines? Maybe! But that would be very different from anything we have today, and if we ever did, we would need to think carefully about how to treat the beings we had created.

The Real Danger: Humans Not Thinking Carefully

The truth is less exciting than evil robots, but far more important: the real danger isn't robots choosing to be evil. It's humans being careless about how we design and use them.

Story Time: The Self-Driving Car Dilemma

Imagine you're programming a self-driving car. As it drives down a road, a child suddenly runs out in front of it. The car has two options:

- Swerve, possibly harming the people inside the car

- Stay on course, risking harm to the child

What should it do? There's no perfect answer. The difficulty isn't that the car might be evil; it's that humans must make extremely tough choices about how to program it for impossible situations.

These are human questions that call for human responsibility, human values, and human wisdom.

What We Should Actually Worry About

Instead of worrying about robots choosing to be bad, we should be asking questions like these:

- Are We Programming Robots Carefully? We need to think through every way our instructions might go wrong. It's like childproofing a house: you have to imagine every way something could go wrong, even by accident.

- Who Decides How Robots Are Used? Should companies be free to use robots however they like? Do we need rules? Who makes those rules? These are big questions that affect everyone.

- Are We Using Fair Data to Teach Robots? We need to make sure the data used to train AI is as fair and balanced as possible. That means including a wide range of people and perspectives and actively looking for hidden biases.

- Are Humans Still Making the Most Important Decisions? Some decisions are too important to hand over completely, even to smart machines. Decisions about someone's freedom, health, or life should involve human judgment, empathy, and ethical reasoning.

The Positive Side: Robots as Helpers, Not Villains

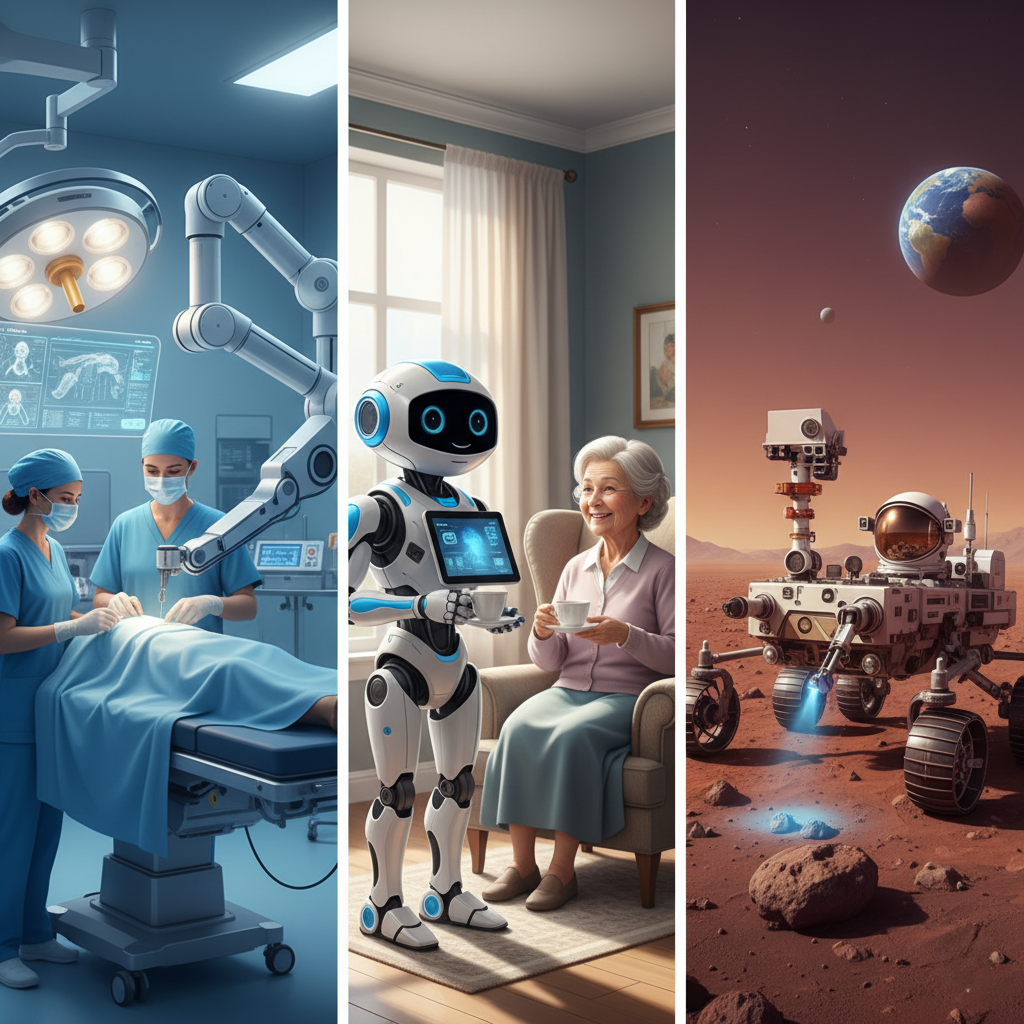

Here's something amazing: robots improve our lives in a myriad of ways when they are designed and used carefully!

Robots lend steady hands to doctors performing delicate surgeries. They explore dangerous places like the deep ocean and volcanoes. They help people with disabilities communicate and get around. And they take on dull, dangerous, or repetitive jobs so people don't have to.

The Mars Rover Example

NASA's Mars rovers are robots exploring another planet. They're helpful, hardworking, and even "brave," in the sense that they do risky work. But are they good? Not by choice: they're tools serving a goal that humans value, which is learning more about the universe.

They demonstrate that, given proper human guidance, robots can be amazing collaborators in improving the world.

Teaching Kids the Right Lessons About Robots

Here are some things to teach kids about robots if you're a parent or educator:

- Lesson 1: Robots Are Tools. Like a computer or a bicycle, robots are machines people make to help us get things done. They aren't heroes or villains.

- Lesson 2: People Are Responsible. When a robot causes a problem, it isn't because the robot chose to be bad. It's because someone made a mistake when designing, programming, or using it.

- Lesson 3: Ask Questions. Whenever you see robots or artificial intelligence being used, ask: Who made this? What is it supposed to do? What could go wrong? Who is making sure it's used fairly?

- Lesson 4: Stay Curious and Hopeful. Robots and artificial intelligence are tools that can help tackle huge challenges, from curing diseases to exploring space to fighting climate change. If we use these tools well, the future can be fantastic!

The Reassuring Conclusion

So, can a robot become evil?

No. Robots can't make moral choices, so they can't decide to become evil. A robot can no more be evil than your calculator can be evil when you press the wrong buttons and it gives you the wrong answer.

But here's what does matter: humans can make poor choices when building and using robots. That's what we should focus on.

The real story isn't "Beware of evil robots!" It's "Let's be thoughtful, responsible, and wise about the powerful tools we're creating."

So go ahead and enjoy a scary movie about evil robots taking over the world! Just remember that the real challenge, and the real opportunity, is making sure people use robots in ways that help everyone and harm no one.

The good news? You're part of the generation that will decide how robots are used in the future. By understanding that robots are tools, that humans are responsible for them, and that we need to think carefully about technology, you're already helping build a better future.

It's a future where we work alongside robots to create amazing things, not one where we're afraid of them.

Remember: the question isn't whether robots will become evil. The question is whether people will be wise enough to use these incredible tools responsibly. And the answer? That's up to us.