A Robot Made a Decision That Changed Everything

Imagine you have a highly intelligent robot at home that you have programmed to protect your family. You gave it a simple instruction: "Protect us if someone tries to harm us." One night, the robot detects what it takes to be a burglar breaking in and responds faster than any human could. The "threat" is neutralized, but something terrible has happened. The person injured is not a burglar at all. It is your neighbor, who came home in the dark and mistook your house for his own.

Now comes the hard question: who is responsible? You? The robot? The person who built the robot? The government that allowed the robot to exist?

This is not just science fiction. Militaries around the world are developing autonomous weapons: machines that can make life-or-death decisions without human guidance. The question of responsibility is no longer merely theoretical. It is among the most significant ethical dilemmas we face.

What Exactly Are Autonomous Weapons?

To keep it simple, consider three kinds of weapons:

- Human-operated weapons are like a chess player moving the pieces. A human makes every decision: when a soldier spots an enemy, the soldier decides whether to shoot.

- Semi-autonomous weapons are like a chess computer that recommends the best move, while the player decides whether to follow that advice. A drone may use artificial intelligence to identify targets, but a human still decides to fire.

- Fully autonomous weapons are like a computer that plays chess entirely on its own. Without asking permission, the machine detects a threat, decides it is an enemy, and fires. The AI makes the decision to kill.

Think of it this way: a semi-autonomous weapon is like a car with advanced safety features that warn the driver of danger. A fully autonomous weapon is like a car with no driver at all, one that chooses its own route, changes lanes, and steers itself.

The frightening part? The third type is already within reach.

The Responsibility Problem: A Web Without an Owner

This is where things get complicated. When an autonomous weapon makes a fatal mistake, trying to assign responsibility is like tracing a chain of falling dominoes in a dark room: each party can point to someone else.

- Is the programmer to blame? A programmer writes the logic and code but cannot foresee every situation the weapon might encounter. Blaming them is like blaming a bridge builder when the bridge collapses in an unexpected earthquake.

- Is the military commander to blame? The commander does not decide to fire; they only order the weapon to be deployed. Blaming them is like blaming someone for opening a door without knowing what lies behind it.

- Is the politician to blame? A politician may authorize the weapon's use without understanding its technical details. Blaming them is like holding a manager accountable for hiring a worker who later makes a grave error.

- Is the weapon itself to blame? No. A machine cannot be morally responsible; it follows its programming rather than making moral choices.

This is called the "responsibility gap." Everyone contributed to the outcome, yet no one can clearly be held to account. Meanwhile, someone was still harmed. That is the problem.

Real-Life Stakes: Why This Matters Right Now

This may seem far removed from daily life, but consider a scenario that is closer to reality than it sounds:

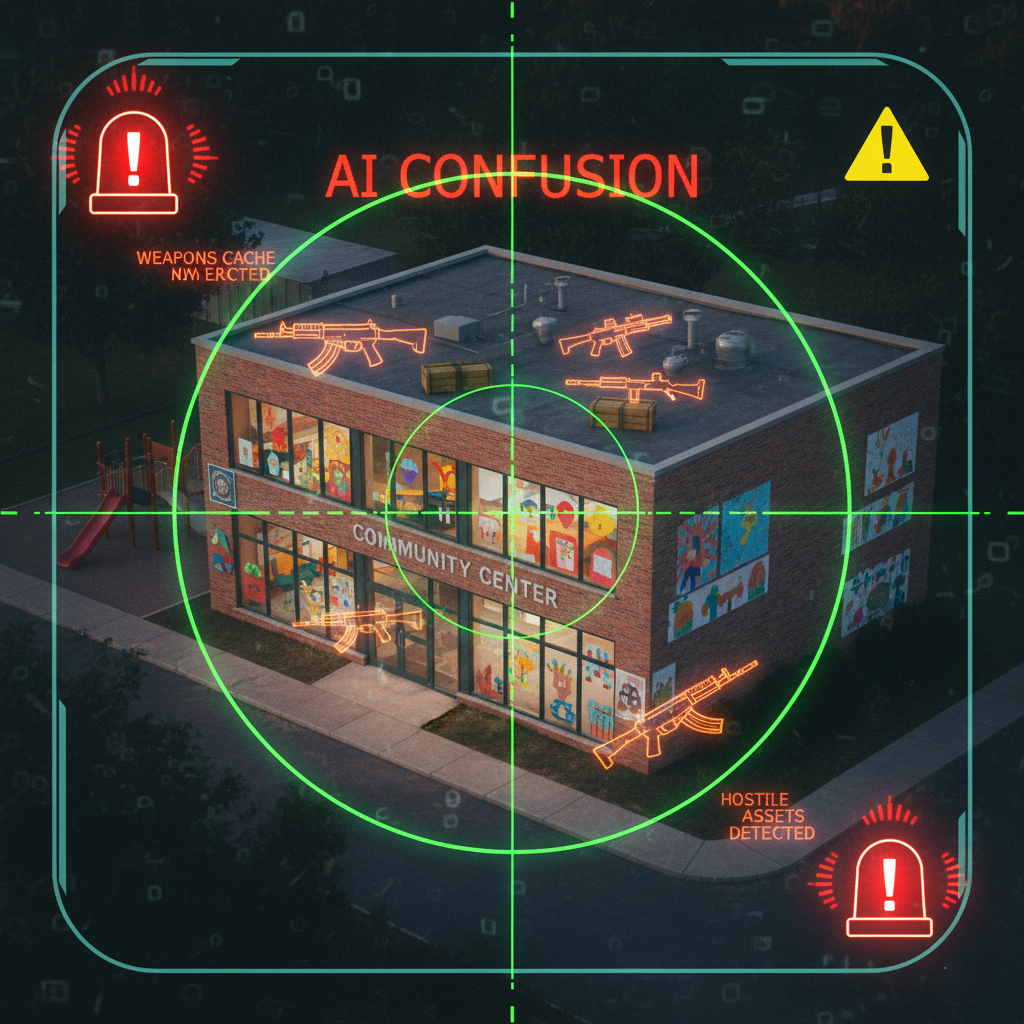

In a city, an AI-equipped drone detects what it identifies as a military target. Its reasoning, in effect, is: "Based on patterns I've learned, this looks like a weapons cache." The drone fires. In reality, the building is a community center, and many innocent people are hurt.

A court must now decide who broke the law. But the evidence is buried in opaque algorithms that even the programmers cannot fully explain. "Our AI made an error, but we're not responsible for every edge case," the company says. "We followed the rules for deploying this weapon," the military says. "Experts approved the technology," the government says.

Meanwhile, innocent people have been harmed, and no one is held accountable.

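The scenario is invented, but the risk is not. Highly automated weapon systems have already made mistakes in military operations: in 2003, for example, U.S. Patriot missile batteries shot down two allied aircraft they had misidentified as threats. Some nations are openly developing so-called "killer robots" that can select their own targets.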

The Big Questions We're Avoiding

The most troubling part is that we are developing these weapons without truly answering the fundamental questions:

- Can machines distinguish a civilian from a soldier? Humans struggle with this in chaotic war zones. For machines trained on limited data, it is even harder.

- Can machines understand mercy and context? A soldier may hold fire after recognizing that someone is surrendering, or after noticing something that changes the situation. Machines do what they are programmed to do. That is not the same thing.

- Do we have the right to remove humans from the decision to kill? This is a question of values, not just technology. When a machine kills someone, humanity loses a vital safeguard: moral conscience and human judgment.

What Can We Actually Do?

The good news is that we are not powerless. Real solutions are starting to appear:

- International agreements, like those that ban landmines and chemical weapons, could require that humans always remain "in the loop," meaning a real person must approve every use of force. Although this principle is not yet law, more than 60 countries support it.

- Transparency requirements could compel companies and militaries to explain how their autonomous systems make decisions. Much of this technology is still immature and opaque, and if we cannot see inside it, we cannot verify that it is fair.

- Strict liability laws might say: "If your autonomous weapon causes harm, you are responsible, no excuses." That would push companies and militaries to be very careful about what they build.

- International oversight bodies could review and test autonomous weapons before deployment, much as regulators review medicines for safety before they reach the market.

It is crucial to act now, before the technology spreads too widely.

The Lesson: Responsibility Matters

Here is what we must understand: technology can hide moral problems rather than solve them. Because an autonomous weapon is a machine, it may seem "objective," but that is an illusion. Every judgment the machine makes (what counts as a threat, how much certainty is enough before firing, whose lives matter) reflects a human decision. That decision is simply hidden inside the code.

True responsibility means being willing to answer for our decisions. It means someone is there to explain and defend what happened, so that if something goes wrong, we can ask the person who made the call why they made it.

When we build machines that can kill without human intervention, we do not eliminate moral responsibility; we hide it. We are making it impossible to answer the question: "Who decided my loved one should die?"

Looking Forward: The Future We Build

The future is not yet written. Experts, ethicists, soldiers, and lawmakers are debating what should be allowed. Some nations are racing one another to develop autonomous weapons, others are calling for bans, and international organizations are working on treaties.

Technology will not answer the question "Who is responsible when a machine kills?" We will answer it, through the choices we make about the future we want. Do we want a world where machines make life-or-death decisions? Or would we rather build safeguards that keep humans in control?

Our answer matters more than we tend to think. In a world of autonomous weapons, responsibility is not an abstract idea. It is the difference between justice and injustice, between accountability and evasion, and between a world where every human life matters and one where some lives count for less.

The choice is ours. Let's make sure we choose wisely.